From Team Lead to System Designer: what leadership looks like when humans and AI work side by side

AI will not make leadership less important. It will make weak leadership easier to spot, and it will push good leaders to design workflows, guardrails, approvals and trust instead of just chasing tasks.

AI is entering technical organizations not as magic, but as a new layer in the workflow. It can watch, sort, draft, route and recommend, which means leadership is shifting from assigning tasks to designing how humans, software, approvals and exceptions work together. That shift does not stop with managers. Developers, engineers and other specialists are starting to act like team leads for AI agents too: assigning work, reviewing outputs, setting boundaries and deciding when human judgment needs to step back in.

AI is not replacing leadership. It is exposing weak leadership.

AI is unlikely to remove the need for leadership.

What it is much more likely to do is expose how much weak leadership was really just task chasing.

For years, a surprising amount of management in technical companies has been part judgment, part coordination and part damage control. Someone chases missing information. Someone translates between engineering and operations. Someone knows which spreadsheet matters, which planner to call and which exception to ignore because it usually looks worse than it is.

That glue work keeps the place running. It also hides weak process design.

Now AI is entering that world. Not as a robot boss. Not as a science-fiction replacement for experience. More like a new layer in the workflow: software that can watch, sort, draft, route, summarize and sometimes trigger the next step.

That is useful. It also forces companies to answer questions they have put off for years.

Who is allowed to decide what?

What needs human approval?

What happens when the system is wrong, but sounds convincing?

Who owns the exception?

Who owns the mess after the exception?

That is why this is a leadership topic.

Not because AI suddenly became wise. Because the old management model was often thinner than people wanted to admit.

The real opportunity is not “more AI”

Most companies do not need another AI pilot. In many cases, they need cleaner workflows.

That part gets buried under all the talk about agents, copilots, assistants, automation and digital labor. The bigger opportunity is not one more clever tool. It is finally fixing work design that should have been fixed years ago.

Recent work from McKinsey, Microsoft and Deloitte points in roughly the same direction: AI adoption is moving faster than organizational redesign. The technology is spreading. The management model is still catching up.

And most organizations already know where the drag lives.

It lives in bad handoffs.

It lives in unclear approvals.

It lives in rework.

It lives in five people checking the same thing because nobody trusts the upstream input.

It lives in experienced employees carrying too much invisible context in their heads.

AI can help with that. Not magically. But enough to matter.

The manager’s job is moving up a level

The old question was simple: Who owns the task?

That is still useful. But it is no longer enough.

Once AI starts doing part of the watching, sorting, drafting or recommending, the more important question becomes: How should this system work?

That is a different kind of leadership.

It is less about assigning work item by item and more about designing the rules around the work. What is automated. What is assisted. What stays recommendation-only. What needs sign-off. What gets logged. What gets escalated. What gets reviewed a week later, when people can admit what really happened.

Where AI is allowed to route work, trigger actions or materially shape decisions, you are redesigning authority whether you use that language or not.

And this shift does not stop with formal managers.

In many technical teams, individual contributors are starting to take on a kind of frontline leadership too. A software developer working with several AI agents is no longer just writing code. They are assigning subtasks, reviewing outputs, rejecting weak work, setting boundaries and deciding when human intervention is needed. In practice, that starts to look a lot like being a team lead for digital workers, even if nobody changes the title on the org chart.

That matters because it changes where leadership sits. Some of the first real managers of AI systems will not be VPs. They will be developers, engineers, analysts and operators who learn how to direct, review and contain machine-driven work before the organization fully catches up.

Leadership teams usually do not notice any of this until the first uncomfortable moment.

The planner says, “I’m not taking a line down because the model flagged a pattern.”

The engineer says, “Why am I only seeing this after the system already routed it?”

The operations manager says, “If this is wrong, who owns the call?”

The project sponsor says, “Let’s not slow the rollout with too many controls.”

That last sentence causes more trouble than most AI demos.

Because nobody wants to look anti-innovation. So teams leave the boundaries fuzzy. They tell themselves they will tighten governance later. What that often means is that the workflow goes live before anyone has really decided where judgment still belongs.

What this can look like inside a real company

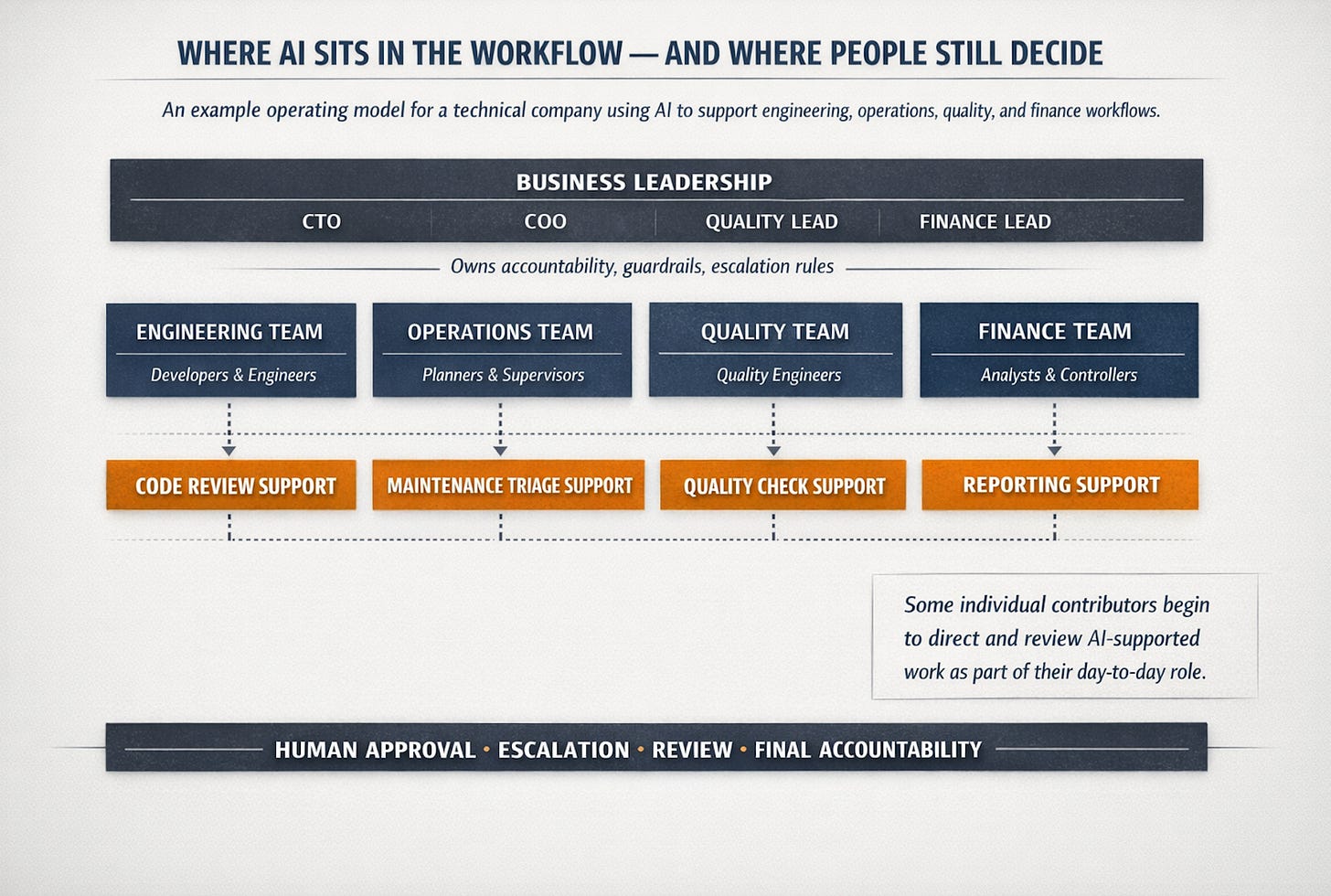

An example org chart for a technical organization where human teams and AI agents work side by side.

Example structure: formal managers still own accountability, but AI agents increasingly support workflows across engineering, operations, quality and finance. In some teams, individual contributors begin to act like frontline leads for digital workers.

Think shopfloor, not software demo

The best mental model for human–AI teamwork is not a futuristic office. It is a production line.

On a good line, nobody relies on heroics as the operating model. The flow is designed. Checks happen at known points. Escalation paths exist before the problem arrives. When something drifts out of tolerance, the system makes the issue visible and gets the right person involved.

That is how human–AI teams should work.

Let the system do the repetitive work machines are good at: watching signals, comparing patterns, pulling context, preparing first drafts, checking for missing information, routing the issue, flagging anomalies.

Let people handle the parts that are still stubbornly human: tradeoffs, timing, exceptions, accountability, safety, customer impact and those gray areas where the technically correct answer is not automatically the right business answer.

That split is not about human pride. It is about consequence.

The mistake many leaders make is assuming that because AI can produce an answer, it should also carry more authority. Those are different things. A system can be very useful without being given the keys.

What an AI agent actually is in practice

This is where the conversation often gets sloppy.

An AI agent is not a teammate in the normal sense. It does not own outcomes. It does not absorb blame. It does not understand internal politics, operational history or the fact that one supplier always says “confirmed” two days before missing the date.

What it can do is still valuable.

It can turn a messy input into a clean first pass. It can monitor signals at a scale no person wants to. It can surface patterns that would otherwise stay buried in logs, emails and maintenance notes. It can keep a workflow moving when the work is structured enough.

But here is the line leaders need to respect: speed is not judgment.

And the best early use cases are not just repetitive tasks. They are repetitive tasks with reasonably clean inputs, bounded failure costs and clear review logic.

That is where AI tends to help without creating more risk than value.

What strong leadership looks like now

Good leadership in a human–AI setup is not about who writes the best prompt in the room.

It is about four unglamorous things done well.

1. Clear decision rights

Decision rights come down to three questions:

What may the system do on its own?

What may it recommend only?

What always needs human approval?

A maintenance system may suggest likely failure modes. That does not mean it gets to schedule downtime.

A service agent may draft a customer response. That does not mean it gets to make a concession.

A quality workflow may flag suspicious patterns. That does not mean it decides whether a shipment gets blocked.

These are leadership calls, not technical settings.
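Once leaders have made these calls, writing them down as an explicit policy keeps them from drifting back into "whatever the tool happens to do." The Python sketch below is a hypothetical illustration, not a feature of any particular product. The three authority levels come from the questions above. The action names and the rule that unlisted actions default to human approval are assumptions made for the example.

```python
from enum import Enum
from typing import Optional


class Authority(Enum):
    """How much the system may do on its own for a given action."""
    AUTONOMOUS = "may act on its own"
    RECOMMEND_ONLY = "may recommend only"
    HUMAN_APPROVAL = "always needs human approval"


# Hypothetical action names, loosely based on the examples in the text.
DECISION_RIGHTS = {
    "flag_quality_pattern": Authority.AUTONOMOUS,
    "route_service_case": Authority.AUTONOMOUS,
    "diagnose_failure_mode": Authority.RECOMMEND_ONLY,
    "send_customer_response": Authority.RECOMMEND_ONLY,
    "schedule_downtime": Authority.HUMAN_APPROVAL,
    "grant_customer_concession": Authority.HUMAN_APPROVAL,
    "block_shipment": Authority.HUMAN_APPROVAL,
}


def authority_for(action: str) -> Authority:
    """Look up an action's authority level; anything unlisted needs human approval."""
    return DECISION_RIGHTS.get(action, Authority.HUMAN_APPROVAL)


def may_execute(action: str, approved_by: Optional[str] = None) -> bool:
    """Return True only if the system is allowed to carry out the action itself."""
    level = authority_for(action)
    if level is Authority.AUTONOMOUS:
        return True
    if level is Authority.HUMAN_APPROVAL:
        # The system may execute only after a named person has signed off.
        return approved_by is not None
    # RECOMMEND_ONLY: the system never acts; a person decides what to do with the proposal.
    return False
```

Defaulting unknown actions to the most restrictive level is a deliberate choice. A new capability must be granted authority explicitly rather than inheriting it by accident.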

2. Guardrails that reflect reality

In practice, guardrails are simple. What data can the system touch? What systems can it access? Which actions are blocked? What thresholds force human review? What needs an audit trail?

If the answer is vague, the control is vague.

And vague control is how teams end up with automation that looks impressive in a dashboard and quietly creates risk everywhere else.
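The five guardrail questions can be folded into a single check that runs before every action. This is a minimal sketch under stated assumptions. The `Guardrails` class, the cost threshold that forces human review, and any system or data names you pass in are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Guardrails:
    """Hypothetical guardrail settings for one AI-enabled workflow."""
    allowed_data: set[str]        # what data the system may touch
    allowed_systems: set[str]     # which systems it may access
    blocked_actions: set[str]     # actions it may never take
    review_cost_threshold: float  # at or above this estimated cost, a person must review
    audit_log: list[dict] = field(default_factory=list)

    def check(self, action: str, system: str, data_sources: set[str], est_cost: float) -> str:
        """Return 'blocked', 'needs_review' or 'allowed', and record the outcome."""
        if action in self.blocked_actions:
            outcome = "blocked"
        elif system not in self.allowed_systems or not data_sources <= self.allowed_data:
            # Touching an unapproved system or data source is treated like a blocked action.
            outcome = "blocked"
        elif est_cost >= self.review_cost_threshold:
            outcome = "needs_review"
        else:
            outcome = "allowed"
        # Every decision, including refusals, goes into the audit trail.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "system": system,
            "data": sorted(data_sources),
            "est_cost": est_cost,
            "outcome": outcome,
        })
        return outcome
```

Refusals are logged on purpose. An audit trail that records only successful actions says little about where the boundaries are actually being tested.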

3. Escalation logic

This is the most underestimated part.

The question is not whether the system is right most of the time. The real question is what happens when it is wrong, late, overconfident or technically correct but operationally clueless.

Who gets pulled in?

How fast?

With what context?

And what stops the same failure mode from repeating next week?

A workflow without escalation logic is brittle.
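Escalation logic can be made just as explicit. The sketch below answers three of the questions above in code: who gets pulled in (`owner`), how fast (`respond_within`) and with what context (`context`). The thresholds, role names and the self-reported confidence score are illustrative assumptions. The fourth question, stopping a repeat, belongs to the review loop rather than to the routing rule.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass
class Case:
    """A unit of work the system handled, with the signals that matter for escalation."""
    case_id: str
    confidence: float  # the system's own confidence score, 0..1 (assumed to exist)
    est_cost: float    # estimated cost of acting, or of failing to act
    is_novel: bool     # no close match in past cases
    context: dict      # what the system saw: inputs, history, its recommendation


@dataclass
class Escalation:
    owner: str                 # who gets pulled in
    respond_within: timedelta  # how fast
    context: dict              # with what context


def escalate(case: Case) -> Optional[Escalation]:
    """Hypothetical escalation rules; returns None when the system may continue."""
    # Hand over everything the system saw, tagged with the case id.
    handover = dict(case.context, case_id=case.case_id)
    if case.est_cost >= 50_000:
        return Escalation("operations_manager", timedelta(hours=1), handover)
    if case.is_novel or case.confidence < 0.6:
        return Escalation("reliability_engineer", timedelta(hours=4), handover)
    return None
```

The cost rule is checked first on purpose: a high-stakes case goes to the person who owns the consequence even when the system sounds confident.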

4. Feedback that turns overrides into learning

One of the first signs of a weak rollout is that people start working around the system.

They ignore suggestions. They do the real work off-system. They approve recommendations without reading them. Or one trusted expert becomes the unofficial cleanup crew for every edge case the workflow cannot handle.

That is not just resistance. It is diagnostic information.

A particularly bad sign is the appearance of a shadow workflow. The official process says the agent is triaging, ranking or drafting. The real process moves back to side chats, spreadsheets and quick calls between the same experienced people. The AI is still there, but now it is mostly generating extra steps.

You see this in real organizations when the pilot looks fine on Tuesday, but on Friday afternoon, when output pressure goes up, everybody quietly bypasses the system and goes back to the one planner, one engineer or one service manager who actually knows where the real constraint is.

That usually tells you something important: the model may not be the main problem. The operating logic around it was never believable enough for people to trust under pressure.

A plant-floor example, but the real version

Take a maintenance team in a factory.

The polished demo version is easy: the AI watches vibration data, reads operator notes, checks service history and proposes a likely diagnosis. Everyone nods. Great.

The real version is less clean.

The agent flags a motor as a likely failure risk on a line that is already behind schedule. The planner knows downtime will hit today’s output. The production manager thinks the model is too cautious. The reliability engineer wants a closer look because the same asset has already produced two false alarms this quarter. Meanwhile, the plant manager is asking why the “smart system” cannot just tell the team what to do.

That is a leadership moment.

Not because the answer is hidden in the model. Because somebody has to decide how authority, risk and timing are balanced.

A serious operating model might look like this: the system can triage and recommend; the planner approves downtime; the reliability engineer reviews repeated-failure patterns; changes to maintenance intervals require approval; high-cost actions trigger escalation; and accepted and rejected recommendations are reviewed weekly to see whether the system is learning the right lessons.

Now the workflow is not just faster. It is clearer.

And clarity is worth more than speed in more situations than most people think.
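The weekly review in that operating model does not need heavy tooling. A minimal sketch, assuming each recommendation and the responsible person's decision are logged as simple records, is to summarize accepted and rejected recommendations per asset and flag assets that keep getting overridden, like the motor with two false alarms this quarter. The `Decision` record format and the two-rejection threshold are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Decision:
    """One recommendation and what the responsible person did with it."""
    asset: str
    recommendation: str
    accepted: bool
    reason: str = ""  # why it was overridden, if it was


def weekly_review(decisions: list[Decision], min_rejections: int = 2) -> dict[str, dict]:
    """Summarize accepted vs. rejected recommendations per asset and flag repeat overrides."""
    counts = defaultdict(lambda: {"accepted": 0, "rejected": 0, "reasons": []})
    for d in decisions:
        entry = counts[d.asset]
        if d.accepted:
            entry["accepted"] += 1
        else:
            entry["rejected"] += 1
            entry["reasons"].append(d.reason)
    summary = {}
    for asset, entry in counts.items():
        total = entry["accepted"] + entry["rejected"]
        summary[asset] = {
            "acceptance_rate": entry["accepted"] / total,
            "rejected": entry["rejected"],
            "reasons": entry["reasons"],
            # Repeated overrides on one asset go to the reliability engineer for review.
            "flag_for_review": entry["rejected"] >= min_rejections,
        }
    return summary
```

The recorded override reasons matter as much as the counts. They are what turn a rejection into something the team can act on.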

The chance most technical leaders are missing

The biggest upside here is not labor reduction.

That is the shallow version of the opportunity.

The deeper opportunity is that AI can make organizations less dependent on invisible heroics.

A junior engineer gets a stronger first view because the relevant history shows up before they have to ask for it.

A planner spends less time chasing context and more time making tradeoffs.

A service manager sees recurring failure patterns earlier instead of hearing about them customer by customer.

A middle manager spends less time being a human router and more time making actual calls.

That matters especially in industrial and technical businesses, where expertise is unevenly distributed and too much operational continuity still depends on a handful of overloaded people who “just know how things work.”

That is not scalable. More important, it is fragile.

The promise of human–AI teamwork, done right, is not that it removes expertise. It gives expertise better reach.

One thing leaders still underestimate: trust is operational

Leaders often talk about trust as if it were a branding issue or an ethics slide.

It is much more practical than that.

People trust a system when they know when it acts, why it acts, when they may overrule it and what happens after they do. They trust it when the escalation path makes sense. They trust it when the same mistake does not keep showing up wrapped in slightly different wording.

They do not trust it because someone said the model is 92 percent accurate.

Trust in human–AI teamwork lives in boundaries, explanations, review loops (human in the loop) and the everyday behavior of the workflow.

That is why leadership matters so much here. Leaders decide how trust gets built or destroyed in the real world.

Getting started without theater

Do not start with a grand AI vision statement.

Start with one ugly workflow.

Pick something repetitive, annoying, high-volume and important enough that people will care if it gets better. Maintenance triage. Quality deviation handling. Supplier follow-up. Service case routing. Engineering change requests.

Then map the real version of the work. Not the official process. The real one.

Where does it stall?

Where do people chase missing context?

Where do they re-enter data?

Where do decisions actually get made?

Where do exceptions land?

Where does one experienced person quietly save the day every week?

That last question is usually where the truth lives.

Then make the hard calls. What belongs to the system? What belongs to the human? What needs sign-off? What needs logging? What needs escalation? What needs weekly review?

That is where leadership starts.

Not with AI theater. With workflow honesty.

Final thoughts

The future of leadership is not human versus machine.

It is better judgment, supported by better systems.

The companies that get this right will not be the loudest ones. They will be the ones that quietly remove friction, clarify authority, improve handoffs and stop wasting experienced people on work that should have been structured better long ago.

And some of the people doing that redesign will not have “manager” in their title. They will be developers, engineers and other technical specialists who learn how to direct and review digital workers as part of their normal job.

The old manager asked, “Who owns the task?”

The stronger leader asks, “How should this system work when humans and AI share the job?”

That is the shift.

Done well, it is not the end of leadership.

It is leadership getting more serious again.

Sources & further reading

This article draws mainly on a small set of current, high-signal sources on AI adoption, workflow redesign, management change and workplace impact. McKinsey is the main anchor for the adoption-versus-value gap and the importance of workflow redesign. Microsoft is useful for the rise of “digital labor” and human-agent teams in knowledge work. Deloitte is strongest on the changing manager role and the tension between human and technological work. OECD adds a more grounded workplace lens through case studies and survey work in manufacturing and finance.

McKinsey & Company

The state of AI in 2025: Agents, innovation, and transformation

The state of AI: How organizations are rewiring to capture value

One year of agentic AI: Six lessons from the people doing the work

Microsoft

Deloitte

OECD

The impact of AI on the workplace: Evidence from OECD case studies of AI implementation

The impact of AI on the workplace: Main findings from the OECD AI surveys of employers and workers

A note on evidence

Most of the management claims in this article are based on survey research, case studies and practitioner analysis rather than hard causal proof. That makes the argument directionally strong, but it should still be read as a practical leadership interpretation, not a law of nature.

Glossary

AI agent: A software system that does more than answer questions or generate text. In the current enterprise sense, an AI agent can use foundation models to carry out complex, multistep work across systems and workflows.

Digital labor: Microsoft’s term for using AI capacity as a new form of workforce support (not as a literal employee, but as on-demand capability that can help teams handle more work).

Human–AI team: A team in which people and AI systems both contribute to the workflow. The most useful way to think about this is not “humans versus machines”, but humans handling judgment and consequence while systems take on more of the repetitive, structured legwork.

Decision rights: A simple but important management idea: who is allowed to decide what. In the context of AI, it means being explicit about what the system may do, what it may only recommend and what still requires human approval. This article uses the term in that practical sense.

Guardrails: The boundaries around an AI-enabled workflow: which data the system may use, which systems it may access, which actions are blocked and which cases force human review. In practice, guardrails are what turn “helpful automation” into something governable.

Escalation logic: The rules for when work must move from the system back to a person. This matters most when the cost of being wrong is high, when the case is unusual or when the system is acting outside its safe boundaries.

Shadow workflow: An unofficial side process that appears when people stop trusting the formal workflow and quietly move the real work back into calls, side chats, spreadsheets or a few experienced individuals. This is a practical term used in the article, not a formal research label. It describes a common failure pattern in operations.

Human in the loop: A setup in which a person must review, approve or override the AI at specific points. In practice, this is often the difference between assistance and delegated authority.

Workflow redesign: Changing the actual flow of work (steps, handoffs, approvals, roles and review points) instead of simply layering AI on top of an old process. In McKinsey’s recent work, this is one of the strongest factors linked to reported AI value.
